This article walks through a worked example of analyzing website logs with Hadoop. The explanation is kept simple and step by step so that it is easy to follow.

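I. Project Requirements

In this article, "logs" means web logs only. There is no precise definition: the term covers, but is not limited to, the user access logs produced by front-end web servers such as apache, lighttpd, nginx, and tomcat, as well as the logs that web applications write themselves.

II. Requirements Analysis: KPI Design

PV (PageView): number of page views

IP: number of unique IP addresses visiting each page

Time: number of page views per hour

Source: number of visits per referring domain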

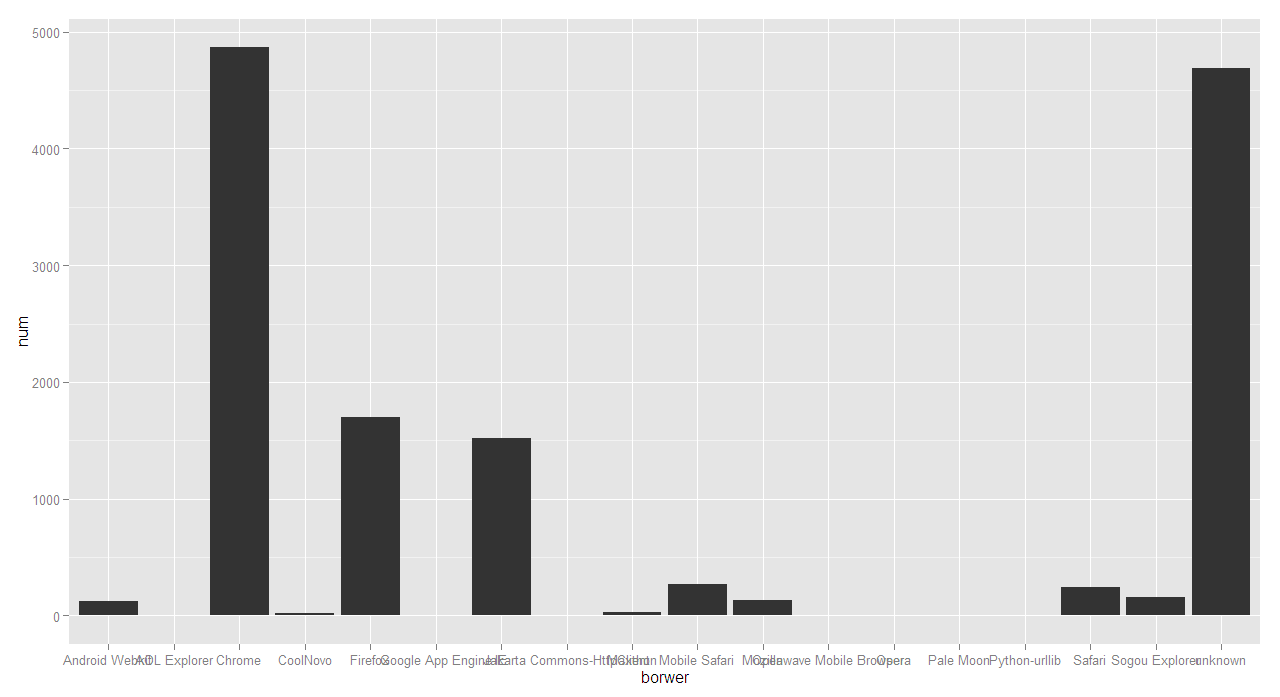

Browser: number of visits per client device (browser)

The rest of this article focuses on the browser statistics.
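Although the article concentrates on the Browser metric, the Source metric listed above needs a key as well: the domain of the referring page. The following is a minimal sketch of how that key might be derived with the standard `java.net.URI` class. The class name `RefererDomain` and the `"unknown"` fallback are assumptions made for illustration; they are not part of the original project.

```java
import java.net.URI;
import java.net.URISyntaxException;

// Hypothetical helper, not part of the original project.
class RefererDomain {

    /** Returns the host of a referer URL, or "unknown" if none can be extracted. */
    static String domainOf(String referer) {
        if (referer == null) {
            return "unknown";
        }
        // Access logs wrap the referer in double quotes; strip them first.
        String cleaned = referer.replace("\"", "").trim();
        try {
            String host = new URI(cleaned).getHost();
            return host != null ? host : "unknown";
        } catch (URISyntaxException e) {
            return "unknown";
        }
    }

    public static void main(String[] args) {
        // Prints www.angularjs.cn
        System.out.println(domainOf("\"http://www.angularjs.cn/A00n\""));
        // A missing referer is logged as "-", which has no host: prints unknown
        System.out.println(domainOf("\"-\""));
    }
}
```

A mapper for the Source metric could emit this value as its key in the same way the browser job emits the browser family.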

III. Analysis Process

1. A sample nginx log record

222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] "GET /images/my.jpg HTTP/1.1" 200 19939 "http://www.angularjs.cn/A00n" "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36"

2. Breaking down the log record above

remote_addr: the client's IP address, 222.68.172.190

remote_user: the client user name, -

time_local: the access time and time zone, [18/Sep/2013:06:49:57 +0000]

request: the request line (method, URL, and HTTP protocol version), "GET /images/my.jpg HTTP/1.1"

status: the response status (200 means success), 200

body_bytes_sent: the size in bytes of the response body sent to the client, 19939

http_referer: the page from which the request was linked, "http://www.angularjs.cn/A00n"

http_user_agent: information about the client's browser, "Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36"
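Of the fields above, time_local is the one the Time metric (page views per hour) would be keyed on. The sketch below shows one way to turn it into an hourly bucket using `java.time`. The class name `HourBucket` and the `yyyyMMddHH` key format are assumptions chosen for illustration, not something the original project prescribes.

```java
import java.time.OffsetDateTime;
import java.time.format.DateTimeFormatter;
import java.time.format.DateTimeParseException;
import java.util.Locale;

// Hypothetical helper, not part of the original project.
class HourBucket {

    // Matches values such as 18/Sep/2013:06:49:57 +0000 (English month names).
    private static final DateTimeFormatter LOG_TIME =
            DateTimeFormatter.ofPattern("dd/MMM/yyyy:HH:mm:ss Z", Locale.ENGLISH);

    // Assumed key layout: year, month, day, hour.
    private static final DateTimeFormatter HOUR_KEY =
            DateTimeFormatter.ofPattern("yyyyMMddHH");

    /** Returns the hour bucket for a time_local value, or null if it cannot be parsed. */
    static String hourKey(String timeLocal) {
        // The log wraps the timestamp in square brackets; remove them if present.
        String cleaned = timeLocal.replace("[", "").replace("]", "").trim();
        try {
            return OffsetDateTime.parse(cleaned, LOG_TIME).format(HOUR_KEY);
        } catch (DateTimeParseException e) {
            return null;
        }
    }

    public static void main(String[] args) {
        // Prints 2013091806
        System.out.println(hourKey("[18/Sep/2013:06:49:57 +0000]"));
    }
}
```

The bucket keeps the hour in the time zone recorded in the log; converting to a local zone would be a separate design decision.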

3. Parsing the log record above in Java (splitting on spaces)

String line = "222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] \"GET /images/my.jpg HTTP/1.1\" 200 19939 \"http://www.angularjs.cn/A00n\" \"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36\"";

String[] elementList = line.split(" ");

for(int i=0;i<elementList.length;i++){

System.out.println(i+" : "+elementList[i]);

}

Test output:

0 : 222.68.172.190

1 : -

2 : -

3 : [18/Sep/2013:06:49:57

4 : +0000]

5 : "GET

6 : /images/my.jpg

7 : HTTP/1.1"

8 : 200

9 : 19939

10 : "http://www.angularjs.cn/A00n"

11 : "Mozilla/5.0

12 : (Windows

13 : NT

14 : 6.1)

15 : AppleWebKit/537.36

16 : (KHTML,

17 : like

18 : Gecko)

19 : Chrome/29.0.1547.66

20 : Safari/537.36"
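As the output shows, splitting on spaces breaks the timestamp, the request line, and the user agent into several tokens each. One alternative is to match the whole record with a regular expression so that bracketed and quoted fields stay intact. The sketch below is an illustration only: the class name `CombinedLogParser` and the pattern are assumptions, and the pattern does not handle quote characters embedded inside a quoted field.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical alternative to line.split(" "); not part of the original project.
class CombinedLogParser {

    // Groups: 1 remote_addr, 2 remote_user, 3 time_local, 4 request,
    //         5 status, 6 body_bytes_sent, 7 http_referer, 8 http_user_agent
    private static final Pattern COMBINED = Pattern.compile(
            "^(\\S+) \\S+ (\\S+) \\[([^\\]]+)\\] \"([^\"]*)\" (\\d{3}) (\\S+)"
            + " \"([^\"]*)\" \"([^\"]*)\"$");

    /** Returns the eight fields of a record, or null if the line does not match. */
    static String[] parse(String line) {
        Matcher m = COMBINED.matcher(line.trim());
        if (!m.matches()) {
            return null;
        }
        String[] fields = new String[m.groupCount()];
        for (int i = 0; i < fields.length; i++) {
            fields[i] = m.group(i + 1);
        }
        return fields;
    }

    public static void main(String[] args) {
        String line = "222.68.172.190 - - [18/Sep/2013:06:49:57 +0000] "
                + "\"GET /images/my.jpg HTTP/1.1\" 200 19939 "
                + "\"http://www.angularjs.cn/A00n\" "
                + "\"Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 "
                + "(KHTML, like Gecko) Chrome/29.0.1547.66 Safari/537.36\"";
        String[] fields = parse(line);
        for (int i = 0; i < fields.length; i++) {
            System.out.println(i + " : " + fields[i]);
        }
    }
}
```

With this approach the user agent arrives as a single field with its quotes already removed, so no tokens need to be rejoined afterwards.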

4. Code for the Kpi entity class:

package org.hmahout.kpi.entity;

public class Kpi {

private String remote_addr;// client IP address

private String remote_user;// client user name; "-" means it is not set

private String time_local;// access time and time zone

private String request;// requested URL

private String status;// response status; 200 means success

private String body_bytes_sent;// size of the response body sent to the client

private String http_referer;// page from which the request was linked

private String http_user_agent;// information about the client's browser

private String method;// request method, e.g. GET or POST

private String http_version; // HTTP version

public String getMethod() {

return method;

}

public void setMethod(String method) {

this.method = method;

}

public String getHttp_version() {

return http_version;

}

public void setHttp_version(String http_version) {

this.http_version = http_version;

}

public String getRemote_addr() {

return remote_addr;

}

public void setRemote_addr(String remote_addr) {

this.remote_addr = remote_addr;

}

public String getRemote_user() {

return remote_user;

}

public void setRemote_user(String remote_user) {

this.remote_user = remote_user;

}

public String getTime_local() {

return time_local;

}

public void setTime_local(String time_local) {

this.time_local = time_local;

}

public String getRequest() {

return request;

}

public void setRequest(String request) {

this.request = request;

}

public String getStatus() {

return status;

}

public void setStatus(String status) {

this.status = status;

}

public String getBody_bytes_sent() {

return body_bytes_sent;

}

public void setBody_bytes_sent(String body_bytes_sent) {

this.body_bytes_sent = body_bytes_sent;

}

public String getHttp_referer() {

return http_referer;

}

public void setHttp_referer(String http_referer) {

this.http_referer = http_referer;

}

public String getHttp_user_agent() {

return http_user_agent;

}

public void setHttp_user_agent(String http_user_agent) {

this.http_user_agent = http_user_agent;

}

@Override

public String toString() {

return "Kpi [remote_addr=" + remote_addr + ", remote_user="

+ remote_user + ", time_local=" + time_local + ", request="

+ request + ", status=" + status + ", body_bytes_sent="

+ body_bytes_sent + ", http_referer=" + http_referer

+ ", http_user_agent=" + http_user_agent + ", method=" + method

+ ", http_version=" + http_version + "]";

}

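}

5. The KPI utility class

package org.hmahout.kpi.util;

import org.hmahout.kpi.entity.Kpi;

public class KpiUtil {

/**

* Converts one log line into a Kpi object.

* @param line a single record from the access log

* @return the parsed Kpi, or null if the line has too few fields

* @author tianbx

*/

public static Kpi transformLineKpi(String line){

String[] elementList = line.split(" ");

// A well-formed record has at least 12 space-separated tokens.

if(elementList.length < 12){

return null;

}

Kpi kpi = new Kpi();

kpi.setRemote_addr(elementList[0]);

// Token 1 is the literal "-" separator; token 2 is $remote_user.

kpi.setRemote_user(elementList[2]);

kpi.setTime_local(elementList[3].substring(1));

kpi.setMethod(elementList[5].substring(1));

kpi.setRequest(elementList[6]);

// Drop the closing quote of the request line.

kpi.setHttp_version(elementList[7].replace("\"", ""));

kpi.setStatus(elementList[8]);

kpi.setBody_bytes_sent(elementList[9]);

kpi.setHttp_referer(elementList[10].replace("\"", ""));

// The user agent contains spaces, so rejoin all remaining tokens.

StringBuilder userAgent = new StringBuilder();

for(int i=11;i<elementList.length;i++){

if(i>11) userAgent.append(' ');

userAgent.append(elementList[i]);

}

kpi.setHttp_user_agent(userAgent.toString().replace("\"", ""));

return kpi;

}

}

6. Algorithm model: a parallel algorithm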

Browser: number of visits per client device (browser)

- Map: {key:$http_user_agent,value:1}

- Reduce: {key:$http_user_agent,value:sum}
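The Map and Reduce steps above can be illustrated without a cluster. The following sketch simulates them in plain Java: each record emits a pair (key, 1), the pairs are grouped by key, and each group is summed. The class name `BrowserCountModel` and the in-memory grouping are assumptions made for illustration; in a real job the framework performs the grouping between the two phases.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory model of the algorithm; not part of the original project.
class BrowserCountModel {

    /** Counts occurrences of each key the way the Map and Reduce steps would. */
    static Map<String, Integer> countByKey(List<String> keys) {
        // Map + shuffle: emit (key, 1) for every record and group the ones by key.
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String key : keys) {
            grouped.computeIfAbsent(key, k -> new ArrayList<>()).add(1);
        }
        // Reduce: sum the values collected for each key.
        Map<String, Integer> totals = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int one : entry.getValue()) {
                sum += one;
            }
            totals.put(entry.getKey(), sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        List<String> browsers = Arrays.asList("Chrome", "Firefox", "Chrome", "IE", "Chrome");
        // Prints {Chrome=3, Firefox=1, IE=1}
        System.out.println(countByKey(browsers));
    }
}
```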

7. MapReduce analysis code

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

import org.hmahout.kpi.entity.Kpi;

import org.hmahout.kpi.util.KpiUtil;

import cz.mallat.uasparser.UASparser;

import cz.mallat.uasparser.UserAgentInfo;

public class KpiBrowserSimpleV {

public static class KpiBrowserSimpleMapper extends MapReduceBase

implements Mapper<Object, Text, Text, IntWritable> {

UASparser parser = null;

@Override

public void map(Object key, Text value,

OutputCollector<Text, IntWritable> out, Reporter reporter)

throws IOException {

Kpi kpi = KpiUtil.transformLineKpi(value.toString());

if(kpi!=null && kpi.getHttp_user_agent()!=null){

if(parser==null){

parser = new UASparser();

}

UserAgentInfo info =

parser.parseBrowserOnly(kpi.getHttp_user_agent());

if("unknown".equals(info.getUaName())){

out.collect(new Text(info.getUaName()), new IntWritable(1));

}else{

out.collect(new Text(info.getUaFamily()), new IntWritable(1));

}

}

}

}

public static class KpiBrowserSimpleReducer extends MapReduceBase implements

Reducer<Text, IntWritable, Text, IntWritable>{

@Override

public void reduce(Text key, Iterator<IntWritable> value,

OutputCollector<Text, IntWritable> out, Reporter reporter)

throws IOException {

IntWritable sum = new IntWritable(0);

while(value.hasNext()){

sum.set(sum.get()+value.next().get());

}

out.collect(key, sum);

}

}

public static void main(String[] args) throws IOException {

String input = "hdfs://127.0.0.1:9000/user/tianbx/log_kpi/input";

String output ="hdfs://127.0.0.1:9000/user/tianbx/log_kpi/browerSimpleV";

JobConf conf = new JobConf(KpiBrowserSimpleV.class);

conf.setJobName("KpiBrowserSimpleV");

String url = "classpath:";

conf.addResource(url+"/hadoop/core-site.xml");

conf.addResource(url+"/hadoop/hdfs-site.xml");

conf.addResource(url+"/hadoop/mapred-site.xml");

conf.setMapOutputKeyClass(Text.class);

conf.setMapOutputValueClass(IntWritable.class);

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

conf.setMapperClass(KpiBrowserSimpleMapper.class);

conf.setCombinerClass(KpiBrowserSimpleReducer.class);

conf.setReducerClass(KpiBrowserSimpleReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}
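The job registers KpiBrowserSimpleReducer as both combiner and reducer. That is safe here because the reduction is a plain sum: adding up per-mapper partial sums gives the same total as adding up every individual value. The sketch below checks this property in plain Java. The class name `CombinerCheck` and the sample values are assumptions for illustration only.

```java
// Hypothetical check of the combiner property; not part of the original project.
class CombinerCheck {

    /** The reduction used by the job: a plain sum. */
    static int reduce(int[] values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    /** Reduces all values at once, as if no combiner ran. */
    static int withoutCombiner(int[][] perMapTask) {
        int count = 0;
        for (int[] task : perMapTask) {
            count += task.length;
        }
        int[] all = new int[count];
        int pos = 0;
        for (int[] task : perMapTask) {
            System.arraycopy(task, 0, all, pos, task.length);
            pos += task.length;
        }
        return reduce(all);
    }

    /** Reduces each map task's values first, then reduces the partial sums. */
    static int withCombiner(int[][] perMapTask) {
        int[] partials = new int[perMapTask.length];
        for (int i = 0; i < perMapTask.length; i++) {
            partials[i] = reduce(perMapTask[i]);
        }
        return reduce(partials);
    }

    public static void main(String[] args) {
        // Three imaginary map tasks that each emitted some (Chrome, 1) pairs.
        int[][] emitted = { {1, 1, 1}, {1, 1}, {1} };
        System.out.println(withoutCombiner(emitted)); // prints 6
        System.out.println(withCombiner(emitted));    // prints 6
    }
}
```

A reduction that is not associative and commutative, such as an average, could not reuse the reducer as a combiner in this way.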

8. Contents of the output file log_kpi/browerSimpleV

AOL Explorer 1

Android Webkit 123

Chrome 4867

CoolNovo 23

Firefox 1700

Google App Engine 5

IE 1521

Jakarta Commons-HttpClient 3

Maxthon 27

Mobile Safari 273

Mozilla 130

Openwave Mobile Browser 2

Opera 2

Pale Moon 1

Python-urllib 4

Safari 246

Sogou Explorer 157

unknown 4685
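By default, TextOutputFormat separates key and value with a tab, while the R step that follows reads a comma-separated file. Assuming the file used in R is derived from this job output, a small conversion along the following lines would bridge the two. The class name `TsvToCsv` is hypothetical, and the sketch assumes that browser names contain no commas, which holds for the results listed above.

```java
// Hypothetical conversion helper; not part of the original project.
class TsvToCsv {

    /**
     * Converts one "name<TAB>count" line into "name,count".
     * Returns null if the line contains no tab.
     */
    static String toCsvLine(String tsvLine) {
        // Split on the last tab so the count is always the final field.
        int tab = tsvLine.lastIndexOf('\t');
        if (tab < 0) {
            return null;
        }
        String name = tsvLine.substring(0, tab).trim();
        String count = tsvLine.substring(tab + 1).trim();
        return name + "," + count;
    }

    public static void main(String[] args) {
        System.out.println(toCsvLine("Android Webkit\t123")); // prints Android Webkit,123
        System.out.println(toCsvLine("Chrome\t4867"));        // prints Chrome,4867
    }
}
```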

9. Plotting the chart in R

library(ggplot2)

data<-read.table(file="borwer.txt",header=FALSE,sep=",")

names(data)<-c("borwer","num")

ggplot(data,aes(x=borwer,y=num))+geom_bar(stat="identity")
