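This article takes an in-depth but accessible look at sharded clusters in MongoDB. It is a practical topic, so it is shared here in the hope that you will get something out of it. Let's get started.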

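1. What is sharding, and why do it?

A database server usually hits its bottleneck in disk I/O, in network I/O under high concurrency, or in the CPU and memory of a single machine. To get past these bottlenecks we have to scale the server, and there are two ways to do so: scaling up and scaling out. Scaling up means giving the server bigger disks, more and faster memory, and a better CPU. This helps up to a point, but as the data keeps growing the bottleneck comes back, so scaling up is generally not the recommended approach. Scaling out means adding servers: if one is not enough, add a second; if two are not enough, add a third. Whenever a bottleneck appears, we add another server.

Scaling out solves the capacity problem, but how do we spread user reads and writes across several servers? We have to split the data into pieces so that each server stores only part of the whole data set. Cutting one large data set into several parts and storing them on different servers is what sharding means. Sharding is an effective way to relieve write bottlenecks.

Sharding also creates a new problem: queries. Once the data set is spread over several servers, how does a user query it? Suppose a user wants everyone older than 30. Some matching documents may be on server1 and others on server2, so how do we collect all of them? The situation resembles the MogileFS architecture discussed earlier. To upload an image to MogileFS, the client first writes the image's metadata to the tracker and then stores the data on the appropriate data nodes. To read it back, the client asks the tracker, the tracker returns the metadata for the requested file, and the client fetches the data from the data nodes and assembles the image. MongoDB works in much the same way, with one difference: in MogileFS the client talks to the back-end data nodes itself, whereas in MongoDB the client never contacts the back-end data nodes directly. A dedicated MongoDB client proxy talks to them on the client's behalf and returns the combined result to the client, which solves the query problem.

In short, sharding means splitting one large data set into several parts and storing them on multiple servers. Its purpose is to solve the performance problems caused by a data set that has grown too large.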
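The "older than 30" query above can be sketched in a few lines of plain JavaScript. This is only an illustration of the idea, not MongoDB code: the `servers` object, the sample users, and the `proxyFind` helper are all made up for the example.

```javascript
// Hypothetical in-memory "servers"; each holds only part of the user data set.
const servers = {
  server1: [{ name: "alice", age: 25 }, { name: "bob", age: 41 }],
  server2: [{ name: "carol", age: 33 }, { name: "dave", age: 19 }],
};

// The proxy forwards the query to every server and merges the partial results,
// so the client never talks to the back-end servers itself.
function proxyFind(predicate) {
  return Object.values(servers).flatMap((docs) => docs.filter(predicate));
}

const olderThan30 = proxyFind((user) => user.age > 30);
console.log(olderThan30.map((user) => user.name)); // [ 'bob', 'carol' ]
```

The client sees one merged result even though the matching users live on two different servers.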

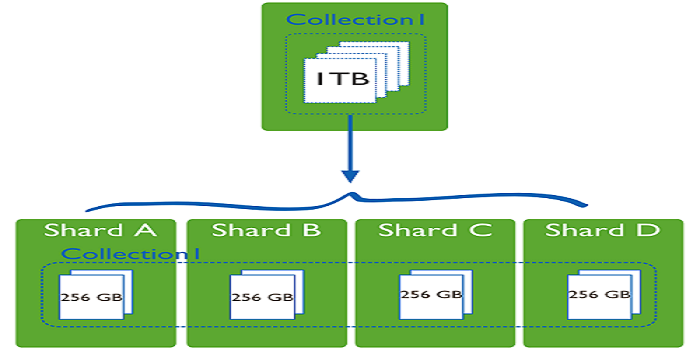

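2. Diagram of a sharded data set

Note: With sharding, a 1 TB data set can be split evenly into four parts so that each node stores one quarter of it. Work that used to fall on one server handling 1 TB is now shared by four servers, which makes data processing more efficient; this is the point of a distributed system. In MongoDB, each node that handles part of a shared data set is called a shard, and a MongoDB cluster that uses this mechanism is called a MongoDB sharded cluster.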

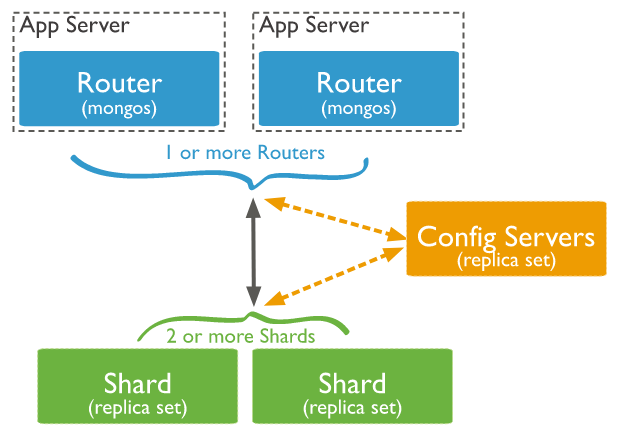

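3. MongoDB sharded cluster architecture

Note: A MongoDB sharded cluster normally has three kinds of roles. The first is the router, which receives read and write requests from clients and runs the mongos service; to make the router highly available, several nodes are usually deployed as routers. The second is the config server, which stores the cluster's metadata and configuration (not the user data itself) and plays a role similar to the tracker in MogileFS; to keep it highly available, the config servers are run as a replica set. The third is the shard, which stores the data, like a MogileFS data node; to keep the data available and intact, each shard is usually a replica set.

4. How a MongoDB sharded cluster works

The user sends a request to the router. The router asks the config server for the metadata relevant to that request, uses it to request data from the appropriate shards, and finally merges the results and responds to the user. In this process the router acts as a client proxy for MongoDB. The config server holds the metadata about the data: which shards hold which data, and which data lives on which shards. This is very similar to the tracker in MogileFS and can be thought of as two tables, one organized by data and one organized by shard node.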
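The request flow just described can be sketched as follows. This is a simplified model in plain JavaScript under stated assumptions: `configMetadata`, `shards`, `targetShards`, and `routeAgeQuery` are hypothetical names, and the ranges and documents are invented for the example.

```javascript
// Hypothetical metadata as a config server might expose it: each entry maps
// a half-open range [min, max) of the shard key to the shard that owns it.
const configMetadata = [
  { min: -Infinity, max: 30, shard: "shard1" },
  { min: 30, max: Infinity, shard: "shard2" },
];

// Hypothetical shard contents, keyed by shard name.
const shards = {
  shard1: [{ name: "dave", age: 19 }, { name: "alice", age: 25 }],
  shard2: [{ name: "carol", age: 33 }, { name: "bob", age: 41 }],
};

// Router step 1: use the metadata to find shards whose range overlaps [lo, hi).
function targetShards(lo, hi) {
  return configMetadata
    .filter((chunk) => chunk.min < hi && lo < chunk.max)
    .map((chunk) => chunk.shard);
}

// Router steps 2 and 3: query only those shards, then merge the results.
function routeAgeQuery(lo, hi) {
  const targets = targetShards(lo, hi);
  const docs = targets.flatMap((name) =>
    shards[name].filter((doc) => doc.age >= lo && doc.age < hi)
  );
  return { targets, docs };
}

const result = routeAgeQuery(31, Infinity);
console.log(result.targets); // [ 'shard2' ]
console.log(result.docs.map((doc) => doc.name)); // [ 'carol', 'bob' ]
```

Because the query filters on the shard key, the router only has to contact the shard whose range can contain matches; a query on another field would have to go to every shard.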

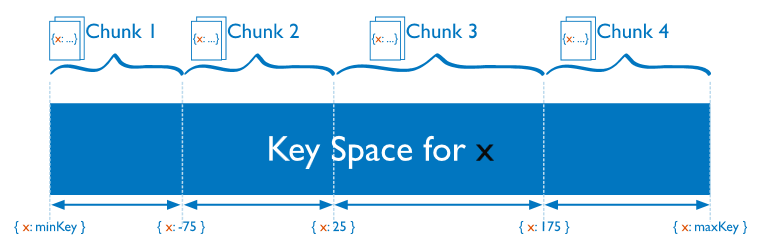

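5. How does MongoDB shard data?

In a MongoDB sharded cluster, a collection is sharded on one of its fields, and the chosen field is called the shard key. Depending on the values of the shard key and on the use case, we can shard on ranges of shard key values or on a hash of the shard key. The resulting layout is stored on the config server, which records which data belongs to each shard. With range-based sharding, for example, the config server records that a contiguous range of shard key values is stored on one shard, as shown in the figure below.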

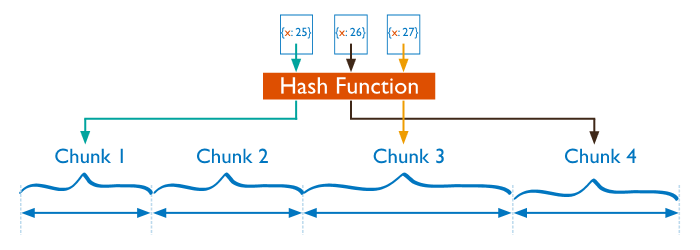

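The figure above illustrates range-based sharding. The shard key space is divided from its minimum value to its maximum value: chunks in the range from the minimum to -75 are stored on the first shard, chunks in the range from -75 to 25 on the second shard, and so on. Range-based sharding can easily leave one shard holding far more data than another, so the data ends up unevenly distributed. For that reason, besides sharding on ranges of shard key values, we can also shard on a hash of the shard key, as shown in the figure below.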
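The range lookup described above can be sketched as a search over ordered upper bounds. The boundaries -75 and 25 come from the figure; the third boundary (175) and the function name `chunkIndexFor` are assumptions added for illustration.

```javascript
// Upper bounds (exclusive) of each chunk range, in ascending order.
// -75 and 25 come from the figure described above; 175 is an assumed value.
const upperBounds = [-75, 25, 175, Infinity];

// Return the index of the chunk whose range [lower, upper) contains the key.
function chunkIndexFor(shardKeyValue) {
  return upperBounds.findIndex((upper) => shardKeyValue < upper);
}

console.log(chunkIndexFor(-100)); // 0
console.log(chunkIndexFor(-75)); // 1
console.log(chunkIndexFor(24)); // 1
console.log(chunkIndexFor(1000)); // 3
```

If most real keys fall between -75 and 25, nearly everything lands in the second range, which is the imbalance the paragraph above warns about.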

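Hash-based sharding computes a hash of the shard key and stores the data on whichever shard the result maps to. As a simplified illustration, take the hash of the shard key modulo the number of shards: if the result is 0 the data goes to the first shard, if it is 1 it goes to the second shard, and so on. (MongoDB itself assigns ranges of hashed values to chunks rather than taking a modulo, as the sh.status() output in section 6 shows.) Because hash values are scattered, hash-based sharding effectively reduces uneven distribution across shards.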
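A minimal sketch of the simplified hash-then-modulo placement from the paragraph above, written for Node.js. MD5 is used only as a convenient stand-in; the helper names `hashKey` and `shardFor` are hypothetical, and the hash values are not claimed to match what MongoDB computes for a hashed index.

```javascript
const crypto = require("crypto");

// Hash the shard key value to a non-negative integer. MD5 is used here only
// for illustration; this is not claimed to match MongoDB's hashed index values.
function hashKey(value) {
  const digest = crypto.createHash("md5").update(String(value)).digest();
  return digest.readBigUInt64BE(0);
}

// Simplified placement from the text: hash modulo the number of shards.
function shardFor(value, shardCount) {
  return Number(hashKey(value) % BigInt(shardCount));
}

// Consecutive keys, which range sharding would keep together, get scattered.
const counts = [0, 0, 0, 0];
for (let key = 1; key <= 10000; key++) {
  counts[shardFor(key, 4)] += 1;
}
console.log(counts); // four counts that are each close to 2500
```

One drawback of a plain modulo is that changing the number of shards remaps most keys, which is one reason to assign ranges of hash values to chunks instead.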

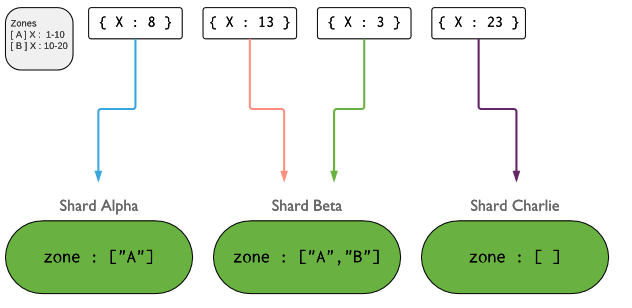

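Besides the two methods above, we can also shard by zone, also called list-based sharding, as shown in the figure below.

The figure above illustrates zone-based sharding. It is typically used when the shard key values form a discrete set rather than an ordered range. For example, we could shard on a province field: give each province with very heavy traffic a shard of its own, group several low-traffic provinces into one shard, and put overseas traffic and anything that is not a domestic province into another. This is a bit like classifying shard key values into categories. Whichever method is used, the guiding principle is that writes should be spread out as widely as possible and reads should be as targeted as possible.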
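The province example can be sketched as a simple lookup table. This is plain JavaScript for illustration and does not use MongoDB's zone commands; the province names, shard names, and the `shardForProvince` helper are all hypothetical.

```javascript
// Hypothetical zone layout: busy provinces get a shard each, quieter
// provinces share one, and everything else falls through to a default.
const zoneOf = new Map([
  ["Guangdong", "shard1"],
  ["Sichuan", "shard2"],
  ["Qinghai", "shard3"],
  ["Ningxia", "shard3"],
]);
const DEFAULT_SHARD = "shard4";

// Look up the shard for a province; unknown or overseas values use the default.
function shardForProvince(province) {
  return zoneOf.has(province) ? zoneOf.get(province) : DEFAULT_SHARD;
}

console.log(shardForProvince("Guangdong")); // shard1
console.log(shardForProvince("Ningxia")); // shard3
console.log(shardForProvince("Overseas")); // shard4
```

All reads for a single province go to one shard, while writes from different provinces are spread over several shards, which matches the principle stated above.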

6. Building a MongoDB sharded cluster

Environment

| Hostname | Role | IP address |
| --- | --- | --- |
| node01 | router | 192.168.0.41 |
| node02/node03/node04 | config server replica set | 192.168.0.42 192.168.0.43 192.168.0.44 |
| node05/node06/node07 | shard1 replica set | 192.168.0.45 192.168.0.46 192.168.0.47 |
| node08/node09/node10 | shard2 replica set | 192.168.0.48 192.168.0.49 192.168.0.50 |

Base environment: synchronize time across all servers, disable the firewall, disable SELinux, set up SSH trust between hosts, and configure hostname resolution.

Hostname resolution

    [root@node01 ~]# cat /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.0.99 time.test.org time-node
    192.168.0.41 node01.test.org node01
    192.168.0.42 node02.test.org node02
    192.168.0.43 node03.test.org node03
    192.168.0.44 node04.test.org node04
    192.168.0.45 node05.test.org node05
    192.168.0.46 node06.test.org node06
    192.168.0.47 node07.test.org node07
    192.168.0.48 node08.test.org node08
    192.168.0.49 node09.test.org node09
    192.168.0.50 node10.test.org node10
    192.168.0.51 node11.test.org node11
    192.168.0.52 node12.test.org node12
    [root@node01 ~]#

Once the base environment is ready, configure the MongoDB yum repository

    [root@node01 ~]# cat /etc/yum.repos.d/mongodb.repo
    [mongodb-org]
    name = MongoDB Repository
    baseurl = https://mirrors.aliyun.com/mongodb/yum/redhat/7/mongodb-org/4.4/x86_64/
    gpgcheck = 1
    enabled = 1
    gpgkey = https://www.mongodb.org/static/pgp/server-4.4.asc
    [root@node01 ~]#

Copy the MongoDB yum repository file to the other nodes

[root@node01 ~]# for i in {02..10} ; do scp /etc/yum.repos.d/mongodb.repo node$i:/etc/yum.repos.d/; done

mongodb.repo 100% 206 247.2KB/s 00:00

mongodb.repo 100% 206 222.3KB/s 00:00

mongodb.repo 100% 206 118.7KB/s 00:00

mongodb.repo 100% 206 164.0KB/s 00:00

mongodb.repo 100% 206 145.2KB/s 00:00

mongodb.repo 100% 206 119.9KB/s 00:00

mongodb.repo 100% 206 219.2KB/s 00:00

mongodb.repo 100% 206 302.1KB/s 00:00

mongodb.repo 100% 206 289.3KB/s 00:00

[root@node01 ~]#

Install the mongodb-org package on every node

for i in {01..10} ; do ssh node$i 'yum -y install mongodb-org' ; done

On the config server and shard nodes, create the data and log directories and change their owner and group to mongod

[root@node01 ~]# for i in {02..10} ; do ssh node$i 'mkdir -p /mongodb/{data,log} && chown -R mongod.mongod /mongodb/ && ls -ld /mongodb'; done

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:47 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

drwxr-xr-x 4 mongod mongod 29 Nov 11 22:45 /mongodb

[root@node01 ~]#

Configure the shard1 replica set

    [root@node05 ~]# cat /etc/mongod.conf
    systemLog:
      destination: file
      logAppend: true
      path: /mongodb/log/mongod.log
    storage:
      dbPath: /mongodb/data/
      journal:
        enabled: true
    processManagement:
      fork: true
      pidFilePath: /var/run/mongodb/mongod.pid
      timeZoneInfo: /usr/share/zoneinfo
    net:
      bindIp: 0.0.0.0
    sharding:
      clusterRole: shardsvr
    replication:
      replSetName: shard1_replset
    [root@node05 ~]# scp /etc/mongod.conf node06:/etc/
    mongod.conf 100% 360 394.5KB/s 00:00
    [root@node05 ~]# scp /etc/mongod.conf node07:/etc/
    mongod.conf 100% 360 351.7KB/s 00:00
    [root@node05 ~]#

Configure the shard2 replica set

    [root@node08 ~]# cat /etc/mongod.conf
    systemLog:
      destination: file
      logAppend: true
      path: /mongodb/log/mongod.log
    storage:
      dbPath: /mongodb/data/
      journal:
        enabled: true
    processManagement:
      fork: true
      pidFilePath: /var/run/mongodb/mongod.pid
      timeZoneInfo: /usr/share/zoneinfo
    net:
      bindIp: 0.0.0.0
    sharding:
      clusterRole: shardsvr
    replication:
      replSetName: shard2_replset
    [root@node08 ~]# scp /etc/mongod.conf node09:/etc/
    mongod.conf 100% 360 330.9KB/s 00:00
    [root@node08 ~]# scp /etc/mongod.conf node10:/etc/
    mongod.conf 100% 360 385.9KB/s 00:00
    [root@node08 ~]#

Start the shard1 and shard2 replica sets

[root@node05 ~]# systemctl start mongod.service

[root@node05 ~]# ss -tnl

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

[root@node05 ~]#for i in {06..10} ; do ssh node$i 'systemctl start mongod.service && ss -tnl';done

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

State Recv-Q Send-Q Local Address:Port Peer Address:Port

LISTEN 0 128 *:22 *:*

LISTEN 0 100 127.0.0.1:25 *:*

LISTEN 0 128 *:27018 *:*

LISTEN 0 128 :::22 :::*

LISTEN 0 100 ::1:25 :::*

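[root@node05 ~]#

Note: When no port is specified, a shard (clusterRole: shardsvr) listens on port 27018 by default, so after starting the shard nodes just make sure port 27018 is listening.

Connect to MongoDB on node05 (for example with mongo --host node05 --port 27018) and initialize the shard1_replset replica set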

> rs.initiate(

... {

... _id : "shard1_replset",

... members: [

... { _id : 0, host : "node05:27018" },

... { _id : 1, host : "node06:27018" },

... { _id : 2, host : "node07:27018" }

... ]

... }

... )

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1605107401, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1605107401, 1)

}

shard1_replset:SECONDARY>

Connect to MongoDB on node08 and initialize the shard2_replset replica set

> rs.initiate(

... {

... _id : "shard2_replset",

... members: [

... { _id : 0, host : "node08:27018" },

... { _id : 1, host : "node09:27018" },

... { _id : 2, host : "node10:27018" }

... ]

... }

... )

{

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1605107644, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1605107644, 1)

}

shard2_replset:OTHER>

Configure the config server replica set

    [root@node02 ~]# cat /etc/mongod.conf
    systemLog:
      destination: file
      logAppend: true
      path: /mongodb/log/mongod.log
    storage:
      dbPath: /mongodb/data/
      journal:
        enabled: true
    processManagement:
      fork: true
      pidFilePath: /var/run/mongodb/mongod.pid
      timeZoneInfo: /usr/share/zoneinfo
    net:
      bindIp: 0.0.0.0
    sharding:
      clusterRole: configsvr
    replication:
      replSetName: cfg_replset
    [root@node02 ~]# scp /etc/mongod.conf node03:/etc/mongod.conf
    mongod.conf 100% 358 398.9KB/s 00:00
    [root@node02 ~]# scp /etc/mongod.conf node04:/etc/mongod.conf
    mongod.conf 100% 358 270.7KB/s 00:00
    [root@node02 ~]#

Start the config servers

    [root@node02 ~]# systemctl start mongod.service
    [root@node02 ~]# ss -tnl
    State Recv-Q Send-Q Local Address:Port Peer Address:Port
    LISTEN 0 128 *:27019 *:*
    LISTEN 0 128 *:22 *:*
    LISTEN 0 100 127.0.0.1:25 *:*
    LISTEN 0 128 :::22 :::*
    LISTEN 0 100 ::1:25 :::*
    [root@node02 ~]# ssh node03 'systemctl start mongod.service && ss -tnl'
    State Recv-Q Send-Q Local Address:Port Peer Address:Port
    LISTEN 0 128 *:27019 *:*
    LISTEN 0 128 *:22 *:*
    LISTEN 0 100 127.0.0.1:25 *:*
    LISTEN 0 128 :::22 :::*
    LISTEN 0 100 ::1:25 :::*
    [root@node02 ~]# ssh node04 'systemctl start mongod.service && ss -tnl'
    State Recv-Q Send-Q Local Address:Port Peer Address:Port
    LISTEN 0 128 *:27019 *:*
    LISTEN 0 128 *:22 *:*
    LISTEN 0 100 127.0.0.1:25 *:*
    LISTEN 0 128 :::22 :::*
    LISTEN 0 100 ::1:25 :::*
    [root@node02 ~]#

Note: When no port is specified, a config server listens on port 27019 by default; after starting it, make sure that port is listening.

Connect to MongoDB on node02 and initialize the cfg_replset replica set

> rs.initiate(

... {

... _id: "cfg_replset",

... configsvr: true,

... members: [

... { _id : 0, host : "node02:27019" },

... { _id : 1, host : "node03:27019" },

... { _id : 2, host : "node04:27019" }

... ]

... }

... )

{

"ok" : 1,

"$gleStats" : {

"lastOpTime" : Timestamp(1605108177, 1),

"electionId" : ObjectId("000000000000000000000000")

},

"lastCommittedOpTime" : Timestamp(0, 0),

"$clusterTime" : {

"clusterTime" : Timestamp(1605108177, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1605108177, 1)

}

cfg_replset:SECONDARY>

Configure the router

    [root@node01 ~]# cat /etc/mongos.conf
    systemLog:
      destination: file
      path: /var/log/mongodb/mongos.log
      logAppend: true
    processManagement:
      fork: true
    net:
      bindIp: 0.0.0.0
    sharding:
      configDB: "cfg_replset/node02:27019,node03:27019,node04:27019"
    [root@node01 ~]#

Note: configDB must be written as replica set name/member address:port, and at least one member must be listed.
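To make the expected shape of that string concrete, here is a small sketch that splits it into its parts. `parseReplicaSetString` is a hypothetical helper written for this article, not something mongos provides, and it only checks the basic shape described in the note.

```javascript
// Split "replSetName/host1:port1,host2:port2" into its parts.
function parseReplicaSetString(value) {
  const slash = value.indexOf("/");
  if (slash <= 0) {
    throw new Error("expected <replSetName>/<host:port>[,<host:port>...]");
  }
  const members = value
    .slice(slash + 1)
    .split(",")
    .filter((member) => member.length > 0)
    .map((member) => {
      const [host, port] = member.split(":");
      return { host, port: Number(port) };
    });
  if (members.length === 0) {
    throw new Error("at least one member is required");
  }
  return { replSetName: value.slice(0, slash), members };
}

const parsed = parseReplicaSetString(
  "cfg_replset/node02:27019,node03:27019,node04:27019"
);
console.log(parsed.replSetName); // cfg_replset
console.log(parsed.members.length); // 3
```

The same replica set name/members format is used later when shards are added with sh.addShard().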

Start the router

    [root@node01 ~]# mongos -f /etc/mongos.conf
    about to fork child process, waiting until server is ready for connections.
    forked process: 1510
    child process started successfully, parent exiting
    [root@node01 ~]# ss -tnl
    State Recv-Q Send-Q Local Address:Port Peer Address:Port
    LISTEN 0 128 *:22 *:*
    LISTEN 0 100 127.0.0.1:25 *:*
    LISTEN 0 128 *:27017 *:*
    LISTEN 0 128 :::22 :::*
    LISTEN 0 100 ::1:25 :::*
    [root@node01 ~]#

Connect to mongos and add the shard1 and shard2 replica sets

mongos> sh.addShard("shard1_replset/node05:27018,node06:27018,node07:27018")

{

"shardAdded" : "shard1_replset",

"ok" : 1,

"operationTime" : Timestamp(1605109085, 3),

"$clusterTime" : {

"clusterTime" : Timestamp(1605109086, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

mongos> sh.addShard("shard2_replset/node08:27018,node09:27018,node10:27018")

{

"shardAdded" : "shard2_replset",

"ok" : 1,

"operationTime" : Timestamp(1605109118, 2),

"$clusterTime" : {

"clusterTime" : Timestamp(1605109118, 3),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

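mongos>

Note: A shard replica set must likewise be added in the form replica set name/members.

At this point the sharded cluster is configured.

Check the status of the sharded cluster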

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5fac01dd8d6fa3fe899662c8")

}

shards:

{ "_id" : "shard1_replset", "host" : "shard1_replset/node05:27018,node06:27018,node07:27018", "state" : 1 }

{ "_id" : "shard2_replset", "host" : "shard2_replset/node08:27018,node09:27018,node10:27018", "state" : 1 }

active mongoses:

"4.4.1" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: yes

Collections with active migrations:

config.system.sessions started at Wed Nov 11 2020 23:43:14 GMT+0800 (CST)

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

45 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1_replset 978

shard2_replset 46

too many chunks to print, use verbose if you want to force print

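mongos>

Note: The output shows that the cluster currently has two shard replica sets, shard1_replset and shard2_replset, as well as the config database kept on the config server.

Enable sharding for the testdb database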

mongos> sh.enableSharding("testdb")

{

"ok" : 1,

"operationTime" : Timestamp(1605109993, 9),

"$clusterTime" : {

"clusterTime" : Timestamp(1605109993, 9),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5fac01dd8d6fa3fe899662c8")

}

shards:

{ "_id" : "shard1_replset", "host" : "shard1_replset/node05:27018,node06:27018,node07:27018", "state" : 1 }

{ "_id" : "shard2_replset", "host" : "shard2_replset/node08:27018,node09:27018,node10:27018", "state" : 1 }

active mongoses:

"4.4.1" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

214 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1_replset 810

shard2_replset 214

too many chunks to print, use verbose if you want to force print

{ "_id" : "testdb", "primary" : "shard2_replset", "partitioned" : true, "version" : { "uuid" : UUID("454aad2e-b397-4c88-b5c4-c3b21d37e480"), "lastMod" : 1 } }

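mongos>

Note: When sharding is enabled for a database, MongoDB assigns it a primary shard. The primary shard stores the collections in that database that are not sharded; sharded collections are distributed across the shards.

Enable sharding for the peoples collection in testdb, using range-based sharding on the age field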

mongos> sh.shardCollection("testdb.peoples",{"age":1})

{

"collectionsharded" : "testdb.peoples",

"collectionUUID" : UUID("ec095411-240d-4484-b45d-b541c33c3975"),

"ok" : 1,

"operationTime" : Timestamp(1605110694, 11),

"$clusterTime" : {

"clusterTime" : Timestamp(1605110694, 11),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5fac01dd8d6fa3fe899662c8")

}

shards:

{ "_id" : "shard1_replset", "host" : "shard1_replset/node05:27018,node06:27018,node07:27018", "state" : 1 }

{ "_id" : "shard2_replset", "host" : "shard2_replset/node08:27018,node09:27018,node10:27018", "state" : 1 }

active mongoses:

"4.4.1" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: no

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

408 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1_replset 616

shard2_replset 408

too many chunks to print, use verbose if you want to force print

{ "_id" : "testdb", "primary" : "shard2_replset", "partitioned" : true, "version" : { "uuid" : UUID("454aad2e-b397-4c88-b5c4-c3b21d37e480"), "lastMod" : 1 } }

testdb.peoples

shard key: { "age" : 1 }

unique: false

balancing: true

chunks:

shard2_replset 1

{ "age" : { "$minKey" : 1 } } -->> { "age" : { "$maxKey" : 1 } } on : shard2_replset Timestamp(1, 0)

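mongos>

Note: If the collection already exists and contains data, we must first create an index on the shard key and only then call sh.shardCollection() to enable sharding for the collection. A range-based shard key can span multiple fields.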
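For a collection that already holds data, the two steps in the note above could look like the sketch below. It is meant to be pasted into a mongo shell connected to mongos, where `db` and `sh` are the shell's built-in objects; the wrapper function `shardExistingCollection` is a hypothetical helper, and passing `db` and `sh` in as parameters is just a choice made to keep the flow explicit.

```javascript
// Intended for the mongo shell connected to mongos, where `db` and `sh`
// are the shell's built-in objects; they are passed in so the flow is explicit.
function shardExistingCollection(db, sh, dbName, collName, key) {
  const coll = db.getSiblingDB(dbName).getCollection(collName);
  // Step 1: a populated collection needs an index on the shard key first.
  coll.createIndex(key);
  // Step 2: enable sharding for the collection on that key.
  return sh.shardCollection(dbName + "." + collName, key);
}

// In the shell:
// shardExistingCollection(db, sh, "testdb", "peoples", { age: 1 });
```

This assumes sharding has already been enabled for the database with sh.enableSharding(), as was done for testdb above.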

Hash-based sharding

mongos> sh.shardCollection("testdb.peoples1",{"name":"hashed"})

{

"collectionsharded" : "testdb.peoples1",

"collectionUUID" : UUID("f6213da1-7c7d-4d5e-8fb1-fc554efb9df2"),

"ok" : 1,

"operationTime" : Timestamp(1605111014, 2),

"$clusterTime" : {

"clusterTime" : Timestamp(1605111014, 2),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

}

}

mongos> sh.status()

--- Sharding Status ---

sharding version: {

"_id" : 1,

"minCompatibleVersion" : 5,

"currentVersion" : 6,

"clusterId" : ObjectId("5fac01dd8d6fa3fe899662c8")

}

shards:

{ "_id" : "shard1_replset", "host" : "shard1_replset/node05:27018,node06:27018,node07:27018", "state" : 1 }

{ "_id" : "shard2_replset", "host" : "shard2_replset/node08:27018,node09:27018,node10:27018", "state" : 1 }

active mongoses:

"4.4.1" : 1

autosplit:

Currently enabled: yes

balancer:

Currently enabled: yes

Currently running: yes

Collections with active migrations:

config.system.sessions started at Thu Nov 12 2020 00:10:16 GMT+0800 (CST)

Failed balancer rounds in last 5 attempts: 0

Migration Results for the last 24 hours:

480 : Success

databases:

{ "_id" : "config", "primary" : "config", "partitioned" : true }

config.system.sessions

shard key: { "_id" : 1 }

unique: false

balancing: true

chunks:

shard1_replset 543

shard2_replset 481

too many chunks to print, use verbose if you want to force print

{ "_id" : "testdb", "primary" : "shard2_replset", "partitioned" : true, "version" : { "uuid" : UUID("454aad2e-b397-4c88-b5c4-c3b21d37e480"), "lastMod" : 1 } }

testdb.peoples

shard key: { "age" : 1 }

unique: false

balancing: true

chunks:

shard2_replset 1

{ "age" : { "$minKey" : 1 } } -->> { "age" : { "$maxKey" : 1 } } on : shard2_replset Timestamp(1, 0)

testdb.peoples1

shard key: { "name" : "hashed" }

unique: false

balancing: true

chunks:

shard1_replset 2

shard2_replset 2

{ "name" : { "$minKey" : 1 } } -->> { "name" : NumberLong("-4611686018427387902") } on : shard1_replset Timestamp(1, 0)

{ "name" : NumberLong("-4611686018427387902") } -->> { "name" : NumberLong(0) } on : shard1_replset Timestamp(1, 1)

{ "name" : NumberLong(0) } -->> { "name" : NumberLong("4611686018427387902") } on : shard2_replset Timestamp(1, 2)

{ "name" : NumberLong("4611686018427387902") } -->> { "name" : { "$maxKey" : 1 } } on : shard2_replset Timestamp(1, 3)

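mongos>

Note: Only one field of a shard key can be hashed. (Since MongoDB 4.4 a compound shard key may contain a single hashed field alongside other fields.) The status output shows that testdb.peoples has a single chunk on shard2, while peoples1 has chunks on both shard1 and shard2. So for now, documents inserted into peoples are all written to shard2, until that chunk is split and the balancer migrates chunks, whereas documents inserted into peoples1 are written to both shard1 and shard2.

Verification: insert data into the peoples1 collection and check whether it is distributed across the shards.

Insert data through mongos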

mongos> use testdb

switched to db testdb

mongos> for (i=1;i<=10000;i++) db.peoples1.insert({name:"people"+i,age:(i%120),classes:(i%20)})

WriteResult({ "nInserted" : 1 })

mongos>

Check the data on shard1

    shard1_replset:PRIMARY> show dbs
    admin 0.000GB
    config 0.001GB
    local 0.001GB
    testdb 0.000GB
    shard1_replset:PRIMARY> use testdb
    switched to db testdb
    shard1_replset:PRIMARY> show tables
    peoples1
    shard1_replset:PRIMARY> db.peoples1.find().count()
    4966
    shard1_replset:PRIMARY>

Note: On shard1 the collection holds 4966 documents.

Check the data on shard2

    shard2_replset:PRIMARY> show dbs
    admin 0.000GB
    config 0.001GB
    local 0.011GB
    testdb 0.011GB
    shard2_replset:PRIMARY> use testdb
    switched to db testdb
    shard2_replset:PRIMARY> show tables
    peoples
    peoples1
    shard2_replset:PRIMARY> db.peoples1.find().count()
    5034
    shard2_replset:PRIMARY>

Note: shard2 has both the peoples and peoples1 collections, and peoples1 holds 5034 documents there. Together shard1 and shard2 hold exactly the 10000 documents we just inserted (4966 + 5034 = 10000).
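The roughly even split can be reproduced offline with a small Node.js simulation. The chunk boundaries are copied from the sh.status() output for testdb.peoples1; everything else is an assumption for illustration, in particular the stand-in hash (the first 8 bytes of MD5 read as a signed 64-bit integer), which is not claimed to be MongoDB's exact hashed-index function.

```javascript
const crypto = require("crypto");

// Chunk boundaries copied from the sh.status() output for testdb.peoples1.
// Each chunk covers [lower bound, upper bound) of the signed 64-bit hash space.
const chunks = [
  { upper: -4611686018427387902n, shard: "shard1_replset" },
  { upper: 0n, shard: "shard1_replset" },
  { upper: 4611686018427387902n, shard: "shard2_replset" },
  { upper: null, shard: "shard2_replset" }, // up to $maxKey
];

// Stand-in hash: first 8 bytes of MD5 read as a signed 64-bit integer.
// Assumption: this is not MongoDB's exact hashed-index function, so the
// per-shard counts will differ from the 4966 / 5034 seen above.
function hash64(value) {
  return crypto.createHash("md5").update(value).digest().readBigInt64LE(0);
}

// Find the first chunk whose upper bound is above the hash value.
function shardForName(name) {
  const h = hash64(name);
  return chunks.find((chunk) => chunk.upper === null || h < chunk.upper).shard;
}

// Same names as the insert loop run through mongos: people1 .. people10000.
const perShard = { shard1_replset: 0, shard2_replset: 0 };
for (let i = 1; i <= 10000; i++) {
  perShard[shardForName("people" + i)] += 1;
}
console.log(perShard); // two counts that add up to 10000, each near 5000
```

On a live cluster, running db.peoples1.getShardDistribution() through mongos reports the per-shard document counts directly, without logging in to each shard.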

OK, that completes the setup and testing of the MongoDB sharded cluster.
