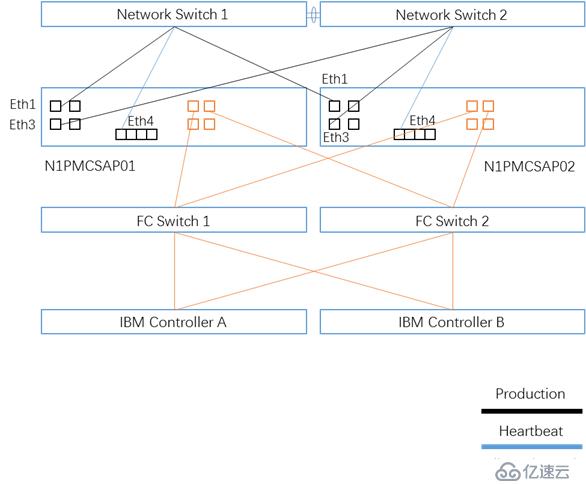

Base environment preparation: environment topology |

Basic Linux service settings |

Disable iptables

# /etc/init.d/iptables stop

# chkconfig iptables off

# chkconfig --list | grep iptables
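
The last command only prints the runlevel table, so the result still has to be read by eye. As a minimal sketch, the hypothetical helper `all_runlevels_off` below (not part of the original procedure) checks one line of `chkconfig --list` output. It assumes the English `N:on` / `N:off` format, so run chkconfig with `LANG=C` if the system locale differs.

```shell
# Succeeds (exit 0) when a `chkconfig --list <service>` line shows "off" in every runlevel.
# Hypothetical helper for illustration; it only inspects the text passed as $1.
all_runlevels_off() {
    case "$1" in
        *:on*)  return 1 ;;  # at least one runlevel is still enabled
        *:off*) return 0 ;;  # only "off" entries were found
        *)      return 2 ;;  # input does not look like chkconfig output
    esac
}

# Usage on a RHEL 6 host (not executed here):
#   all_runlevels_off "$(LANG=C chkconfig --list iptables)" && echo "iptables is disabled at boot"
```

The same check applies to any other service that is switched off with chkconfig.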

Disable SELinux

# setenforce 0

# vi /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of these two values:

# targeted - Only targeted network daemons are protected.

# strict - Full SELinux protection.

SELINUXTYPE=targeted
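
Instead of editing the file in vi, the same change can be scripted. This is a sketch that assumes GNU sed (as shipped with RHEL); the function name `set_selinux_disabled` is hypothetical. The pattern is anchored at the start of the line, so the commented help text and the `SELINUXTYPE=` line are left alone.

```shell
# Set SELINUX=disabled in an SELinux config file (default: /etc/selinux/config).
# Hypothetical helper; requires GNU sed for the in-place -i option.
set_selinux_disabled() {
    cfg="${1:-/etc/selinux/config}"
    # "^SELINUX=" matches neither "# SELINUX=" (comment) nor "SELINUXTYPE=".
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}

# Usage as root (not executed here):
#   set_selinux_disabled && grep '^SELINUX=' /etc/selinux/config
```

The new value takes effect at the next boot; `setenforce 0` only switches the running system to permissive mode.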

Disable NetworkManager

# /etc/init.d/NetworkManager stop

# chkconfig NetworkManager off

# chkconfig --list | grep NetworkManager

Mutual SSH trust between the two nodes |

# mkdir ~/.ssh

# chmod 700 ~/.ssh

# ssh-keygen -t rsa

(press Enter three times to accept the default key file and an empty passphrase)

# ssh-keygen -t dsa

(press Enter three times to accept the default key file and an empty passphrase)

Run on N1PMCSAP01:

# cat ~/.ssh/*.pub >> ~/.ssh/authorized_keys

# ssh N1PMCSAP02 'cat ~/.ssh/*.pub' >> ~/.ssh/authorized_keys

(answer yes to accept the host key, then enter the password for N1PMCSAP02)

# scp ~/.ssh/authorized_keys N1PMCSAP02:~/.ssh/authorized_keys
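
Once the keys are exchanged, it helps to confirm that neither node still prompts for a password. A minimal sketch, with a hypothetical helper name: `BatchMode=yes` is a standard OpenSSH option that makes ssh fail instead of asking interactively, so the exit status shows whether key-based login works.

```shell
# Return 0 only if key-based login to the peer works without any prompt.
# Hypothetical helper; the peer name is passed in by the caller.
check_ssh_trust() {
    peer="$1"
    # BatchMode=yes disables password prompts; ConnectTimeout avoids long hangs.
    ssh -o BatchMode=yes -o ConnectTimeout=5 "$peer" true
}

# Usage (not executed here), run once from each node towards the other:
#   check_ssh_trust N1PMCSAP02 && echo "trust OK"
```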

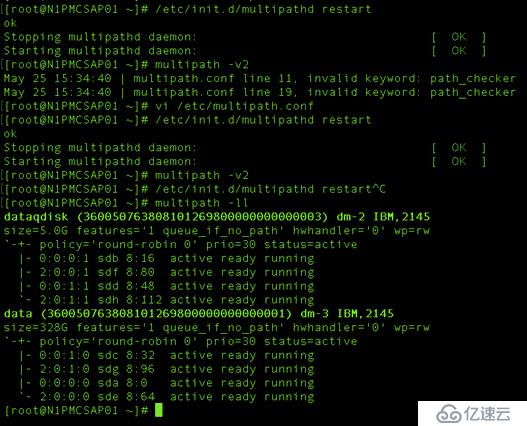

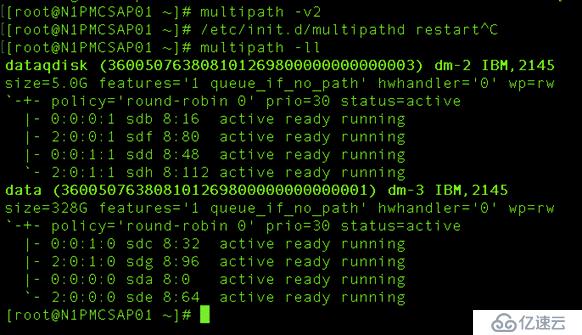

Storage multipath configuration |

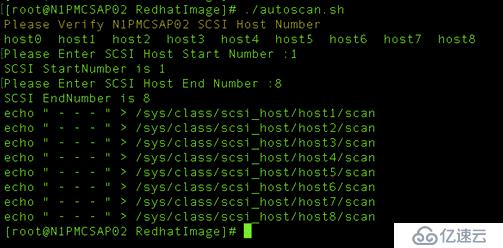

Use the autoscan.sh script to rescan the IBM storage paths

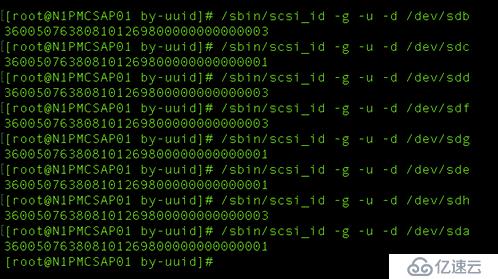

Check the WWIDs of the underlying storage LUNs

| Name | WWID | Capacity | Paths |
| --- | --- | --- | --- |
| Dataqdisk | 360050763808101269800000000000003 | 5 GB | 4 |
| Data | 360050763808101269800000000000001 | 328 GB | 4 |

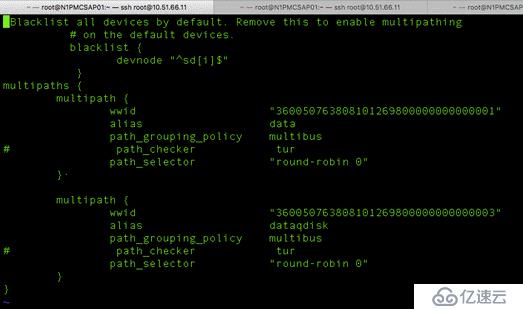

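Create the multipath configuration file

# vi /etc/multipath.conf

The blacklist devnode entry defaults to ^sd[a] and must be changed to ^sd[i]: in this environment the root partition is on sdi, so this excludes the local disk from multipathing.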
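
The article does not reproduce the file itself, so the following is only a sketch of what such a configuration might look like. It is written through a shell heredoc to a sample path rather than to /etc/multipath.conf. The WWIDs are the two listed above; the alias `data` matches the /dev/mapper/data device that appears later in the pvdisplay output, while the alias `dataqdisk` and the helper name are assumptions.

```shell
# Write a minimal multipath.conf sketch to the given path (default: ./multipath.conf.sample).
# Hypothetical helper; review the result before copying it to /etc/multipath.conf.
write_multipath_sample() {
    out="${1:-./multipath.conf.sample}"
    cat > "$out" <<'EOF'
blacklist {
    devnode "^sd[i]"
}
multipaths {
    multipath {
        wwid  360050763808101269800000000000003
        alias dataqdisk
    }
    multipath {
        wwid  360050763808101269800000000000001
        alias data
    }
}
EOF
}

# Usage (not executed here):
#   write_multipath_sample /tmp/multipath.conf.sample
```

With aliases in place, the LUNs show up under stable names in /dev/mapper on both nodes, regardless of the order in which the sd devices are discovered.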

After the configuration is complete, restart the multipathd service

# /etc/init.d/multipathd restart

Run multipath -v2 to refresh the storage paths

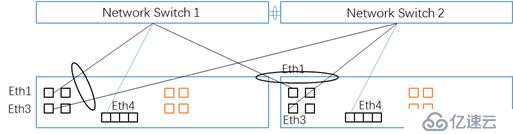

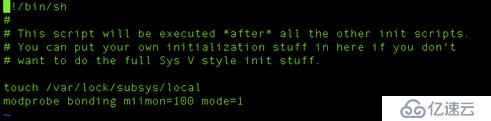

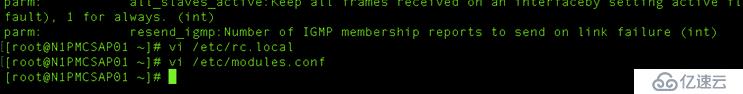

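NIC bonding |

NIC bonding topology

Edit /etc/modules.conf (on RHEL 6, module options normally live in a file under /etc/modprobe.d/ instead)

Create the NIC bonding configuration files

N1PMCSAP01 bond configuration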

[root@N1PMCSAP01 /]# vi /etc/sysconfig/network-scripts/ifcfg-eth2

DEVICE=eth2

# HWADDR=A0:36:9F:DA:DA:CD

TYPE=Ethernet

UUID=3ca5c4fe-44cd-4c50-b3f1-8082e1c1c94d

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

MASTER=bond0

SLAVE=yes

[root@N1PMCSAP01 /]# vi /etc/sysconfig/network-scripts/ifcfg-eth4

DEVICE=eth4

# HWADDR=A0:36:9F:DA:DA:CB

TYPE=Ethernet

UUID=1d47913a-b11c-432c-b70f-479a05da2c71

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

MASTER=bond0

SLAVE=yes

[root@N1PMCSAP01 /]# vi /etc/sysconfig/network-scripts/ifcfg-bond0

DEVICE=bond0

# HWADDR=A0:36:9F:DA:DA:CC

TYPE=Ethernet

UUID=a099350a-8dfa-4d3f-b444-a08f9703cdc2

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=static

IPADDR=10.51.66.11

NETMASK=255.255.248.0

GATEWAY=10.51.71.254

N1PMCSAP02 bond configuration

[root@N1PMCSAP02 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth2

DEVICE=eth2

# HWADDR=A0:36:9F:DA:DA:D1

TYPE=Ethernet

UUID=8e0abf44-360a-4187-ab65-42859d789f57

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

MASTER=bond0

SLAVE=yes

[root@N1PMCSAP02 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth4

DEVICE=eth4

# HWADDR=A0:36:9F:DA:DA:B1

TYPE=Ethernet

UUID=d300f10b-0474-4229-b3a3-50d95e6056c8

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

MASTER=bond0

SLAVE=yes

[root@N1PMCSAP02 ~]# cat /etc/sysconfig/network-scripts/ifcfg-bond0

DEVICE=bond0

# HWADDR=A0:36:9F:DA:DA:D0

TYPE=Ethernet

UUID=2288f4e1-6743-4faa-abfb-e83ec4f9443c

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=static

IPADDR=10.51.66.12

NETMASK=255.255.248.0

GATEWAY=10.51.71.254
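
The ifcfg-bond0 files above carry no BONDING_OPTS line, so the bonding mode in use is not stated. As a sketch that assumes active-backup mode (for example `BONDING_OPTS="mode=1 miimon=100"`, which is an assumption and not taken from the article), the hypothetical helper below reads the kernel's bonding status file and prints the slave that currently carries traffic.

```shell
# Print the currently active slave of a bond from its kernel status file.
# Hypothetical helper; the "Currently Active Slave" line is reported in
# active-backup mode, which is assumed here.
active_slave() {
    status_file="${1:-/proc/net/bonding/bond0}"
    awk -F': ' '/^Currently Active Slave/ { print $2 }' "$status_file"
}

# Usage after `service network restart` (not executed here):
#   active_slave            # prints the active slave, e.g. one of eth2 / eth4
```

Pulling the cable of the active slave and running the helper again is a quick way to confirm that failover between eth2 and eth4 works.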

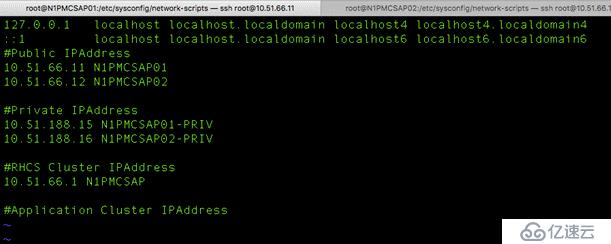

Host name configuration |

Configure the hosts file on both N1PMCSAP01 and N1PMCSAP02

# vi /etc/hosts
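
The entries themselves are not shown in the article. Whatever addresses are used, every name the cluster refers to has to resolve on both nodes. A minimal sketch with a hypothetical helper: `getent hosts` queries the same resolver order the system uses, including /etc/hosts.

```shell
# Report every given host name that does not resolve; return 1 if any is missing.
# Hypothetical helper built on the standard getent utility.
check_names() {
    missing=0
    for name in "$@"; do
        if ! getent hosts "$name" >/dev/null; then
            echo "unresolved: $name"
            missing=1
        fi
    done
    return "$missing"
}

# Usage on each node (not executed here):
#   check_names N1PMCSAP01 N1PMCSAP02 N1PMCSAP01-PRIV N1PMCSAP02-PRIV
```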

RHEL local repository configuration |

# more /etc/yum.repos.d/rhel-source.repo

[rhel_6_iso]

name=local iso

baseurl=file:///media

gpgcheck=1

gpgkey=file:///media/RPM-GPG-KEY-redhat-release

[HighAvailability]

name=HighAvailability

baseurl=file:///media/HighAvailability

gpgcheck=1

gpgkey=file:///media/RPM-GPG-KEY-redhat-release

[LoadBalancer]

name=LoadBalancer

baseurl=file:///media/LoadBalancer

gpgcheck=1

gpgkey=file:///media/RPM-GPG-KEY-redhat-release

[ResilientStorage]

name=ResilientStorage

baseurl=file:///media/ResilientStorage

gpgcheck=1

gpgkey=file:///media/RPM-GPG-KEY-redhat-release

[ScalableFilesystem]

name=ScalableFileSystem

baseurl=file:///media/ScalableFileSystem

gpgcheck=1

gpgkey=file:///media/RPM-GPG-KEY-redhat-release

File system formatting |

[root@N1PMCSAP01 /]# pvdisplay

connect() failed on local socket: No such file or directory

Internal cluster locking initialisation failed.

WARNING: Falling back to local file-based locking.

Volume Groups with the clustered attribute will be inaccessible.

--- Physical volume ---

PV Name /dev/sdi2

VG Name VolGroup

PV Size 556.44 GiB / not usable 3.00 MiB

Allocatable yes (but full)

PE Size 4.00 MiB

Total PE 142448

Free PE 0

Allocated PE 142448

PV UUID 0fSZ8Q-Ay1W-ef2n-9ve2-RxzM-t3GV-u4rrQ2

--- Physical volume ---

PV Name /dev/mapper/data

VG Name vg_data

PV Size 328.40 GiB / not usable 1.60 MiB

Allocatable yes (but full)

PE Size 4.00 MiB

Total PE 84070

Free PE 0

Allocated PE 84070

PV UUID kJvd3t-t7V5-MULX-7Kj6-OI2f-vn3r-QXN8tr

[root@N1PMCSAP01 /]# vgdisplay

--- Volume group ---

VG Name VolGroup

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 4

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 3

Open LV 3

Max PV 0

Cur PV 1

Act PV 1

VG Size 556.44 GiB

PE Size 4.00 MiB

Total PE 142448

Alloc PE / Size 142448 / 556.44 GiB

Free PE / Size 0 / 0

VG UUID 6q2td7-AxWX-4K4K-8vy6-ngRs-IIdP-peMpCU

--- Volume group ---

VG Name vg_data

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 3

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 1

Open LV 1

Max PV 0

Cur PV 1

Act PV 1

VG Size 328.40 GiB

PE Size 4.00 MiB

Total PE 84070

Alloc PE / Size 84070 / 328.40 GiB

Free PE / Size 0 / 0

VG UUID GfMy0O-QcmQ-pkt4-zf1i-yKpu-6c2i-JUoSM2

[root@N1PMCSAP01 /]# lvdisplay

--- Logical volume ---

LV Path /dev/vg_data/lv_data

LV Name lv_data

VG Name vg_data

LV UUID 1AMJnu-8UnC-mmGb-7s7N-P0Wg-eeOj-pXrHV6

LV Write Access read/write

LV Creation host, time N1PMCSAP01, 2017-05-26 11:23:04 -0400

LV Status available

# open 1

LV Size 328.40 GiB

Current LE 84070

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:5
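
The listings above show the finished layout but not the commands that created it. The sketch below prints, and only runs when `RUN=1` is set, a command sequence that would produce a vg_data/lv_data volume on /dev/mapper/data. The filesystem type (ext4) is an assumption, since the article does not name it, and both function names are hypothetical.

```shell
# Print each command, or execute it when RUN=1 is set in the environment.
run() {
    if [ "${RUN:-0}" = "1" ]; then "$@"; else echo "$@"; fi
}

# Create PV, VG, and LV on the multipath device and format the LV.
# Destructive when RUN=1: only use on an empty LUN, and on one node only.
make_data_lv() {
    dev="${1:-/dev/mapper/data}"
    run pvcreate "$dev" &&
    run vgcreate vg_data "$dev" &&
    run lvcreate -l 100%FREE -n lv_data vg_data &&
    run mkfs.ext4 /dev/vg_data/lv_data   # ext4 is assumed, not stated in the article
}

# Usage:
#   make_data_lv            # dry run, prints the four commands
#   RUN=1 make_data_lv      # actually executes them as root
```

The dry-run default keeps the example safe to paste, since pvcreate and mkfs destroy existing data on the device.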

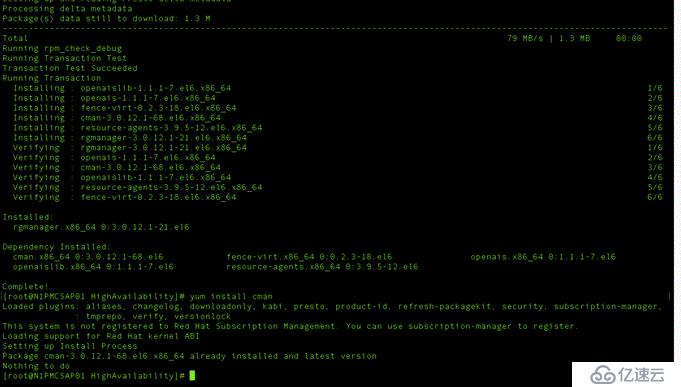

RHCS software component installation |

# yum -y install cman ricci rgmanager luci

Set the password for the ricci user

# passwd ricci

ricci password: redhat
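
The article moves straight from installation to the luci web interface, so the services must already be running at that point. A sketch under the assumption of a stock RHEL 6 install, using the standard `service` and `chkconfig` commands; the function names are hypothetical.

```shell
# Start ricci now and at boot; needed on every cluster node.
start_ricci() {
    service ricci start && chkconfig ricci on
}

# Start luci now and at boot; only needed on the node that hosts the web interface.
start_luci() {
    service luci start && chkconfig luci on
}

# Usage as root (not executed here):
#   start_ricci               # on N1PMCSAP01 and N1PMCSAP02
#   start_luci                # on the node serving port 8084
```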

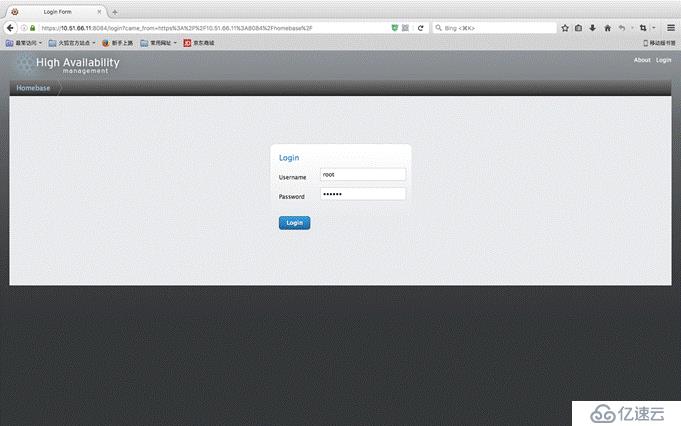

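RHCS cluster setup: luci graphical interface |

Open https://10.51.56.11:8084/ in a browser

User name root, password redhat (the default password has not been changed)

Cluster creation |

Click Manage Cluster in the upper-left corner to create the cluster

| Node Name | Node ID | Votes | Ricci user | Ricci password | Hostname |
| --- | --- | --- | --- | --- | --- |
| N1PMCSAP01-PRIV | 1 | 1 | ricci | redhat | N1PMCSAP01-PRIV |
| N1PMCSAP02-PRIV | 2 | 1 | ricci | redhat | N1PMCSAP02-PRIV |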

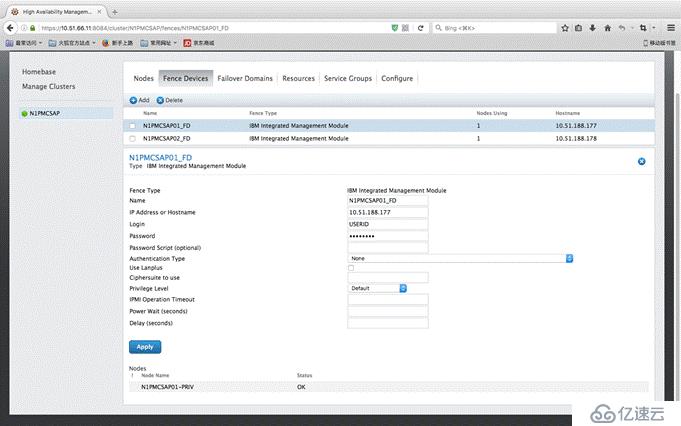

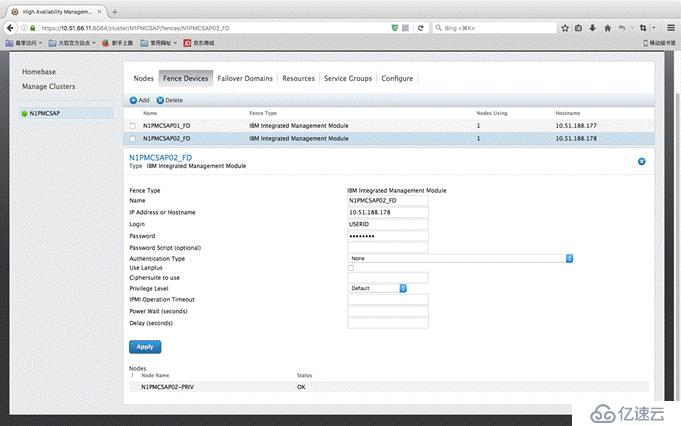

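Fence device configuration |

Because the cluster has only two nodes, split-brain can occur. When a host fails, the fence mechanism decides which host is removed from the cluster. The best practice for fencing is to use the management port integrated on the motherboard: IBM calls it IMM, HPE calls it iLO, and Dell calls it iDRAC. Its purpose is to force the server to power-cycle and go through POST again, which releases the resources it holds. In the figure, the RJ45 port at the upper left is the IMM port.

N1PMCSAP01 fence device parameters: user name USERID, password PASSW0RD

N1PMCSAP02 fence device parameters: user name USERID, password PASSW0RD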

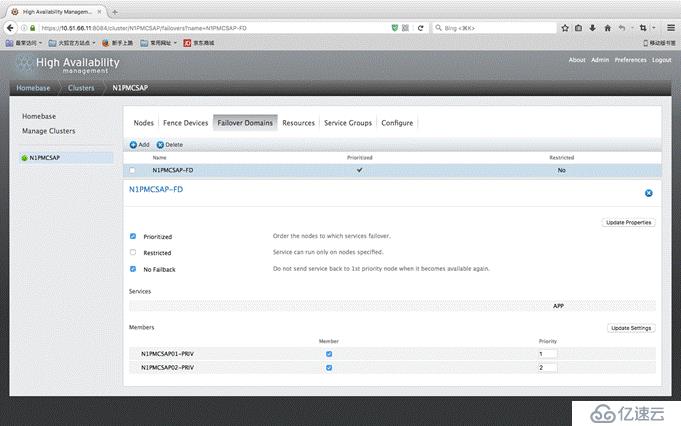

Failover domain configuration |

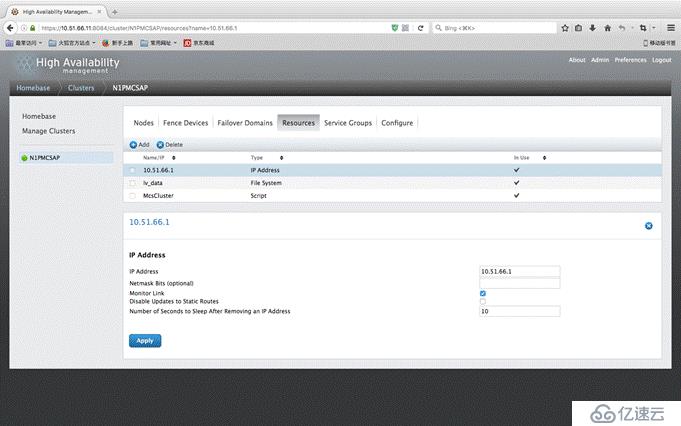

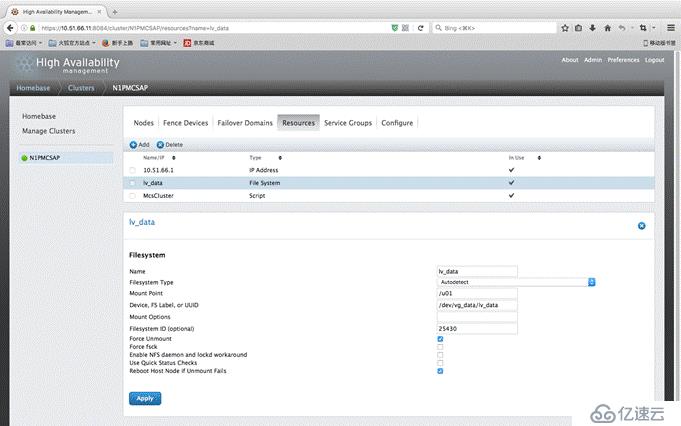

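Cluster resource configuration |

In general, an application should be grouped with the resources it depends on, such as an IP address, a storage medium, and a service script (the application itself).

Each resource needs its attributes set in the cluster resource configuration. In the figure, 10.51.66.1 is the IP resource, which defines the IP address of the clustered application.

lv_data is the cluster disk resource, which defines the mount point and the location of the underlying block device.

McsCluster is the application start script resource, which defines the location of the start script.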

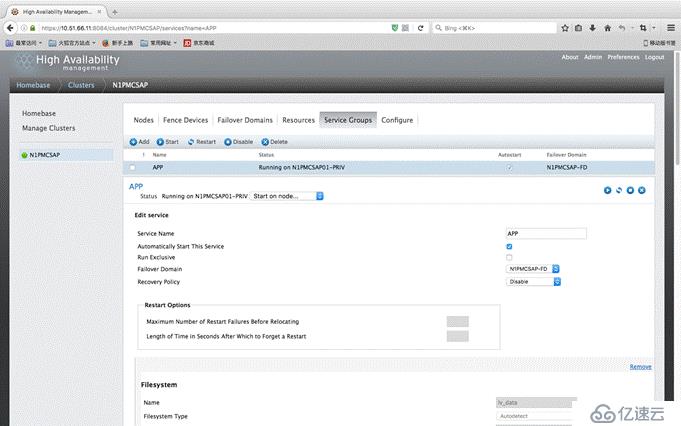

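Service group configuration |

A service group defines one application or a group of applications, together with all the resources the application needs and its start priority. On this page the administrator can manually switch the host a service runs on and set the recovery policy.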

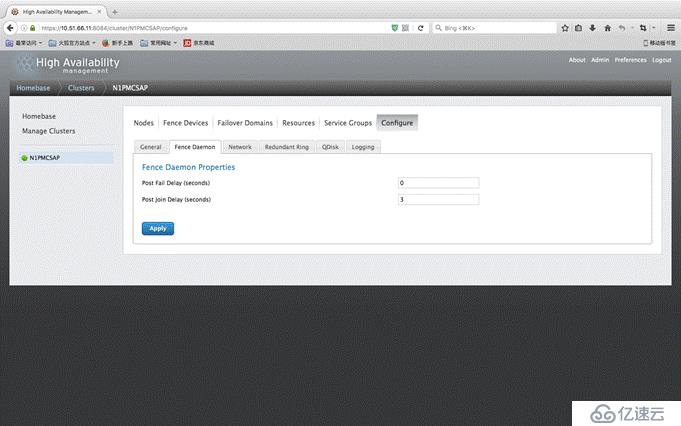

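Other advanced settings |

To keep nodes from fencing each other back and forth while they join the cluster, Post Join Delay is set to 3 seconds here.

View the cluster configuration file |

Finally, the complete cluster configuration can be viewed with:

# cat /etc/cluster/cluster.conf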

[root@N1PMCSAP01 ~]# vi /etc/cluster/cluster.conf

<?xml version="1.0"?>

<cluster config_version="..." name="N1PMCSAP">

<clusternodes>

<clusternode name="N1PMCSAP01-PRIV" nodeid="1">

<fence>

<method name="N1PMCSAP01_Method">

<device name="N1PMCSAP01_FD"/>

</method>

</fence>

</clusternode>

<clusternode name="N1PMCSAP02-PRIV" nodeid="2">

<fence>

<method name="N1PMCSAP02_Method">

<device name="N1PMCSAP02_FD"/>

</method>

</fence>

</clusternode>

</clusternodes>

<cman expected_votes="1" two_node="1"/>

<rm>

<failoverdomains>

<failoverdomain name="N1PMCSAP-FD" nofailback="1" ordered="1">

<failoverdomainnode name="N1PMCSAP01-PRIV" priority="1"/>

<failoverdomainnode name="N1PMCSAP02-PRIV" priority="2"/>

</failoverdomain>

</failoverdomains>

<resources>

<ip address="10.51.66.1" sleeptime="10"/>

<fs device="/dev/vg_data/lv_data" force_unmount="1" fsid="25430" mountpoint="/u01" name="lv_data" self_fence="1"/>

<script file="/home/mcs/cluster/McsCluster" name="McsCluster"/>

</resources>

<service domain="N1PMCSAP-FD" name="APP" recovery="disable">

<fs ref="lv_data">

<ip ref="10.51.66.1"/>

</fs>

<script ref="McsCluster"/>

</service>

</rm>

<fencedevices>

<fencedevice agent="fence_imm" ipaddr="10.51.188.177" login="USERID" name="N1PMCSAP01_FD" passwd="PASSW0RD"/>

<fencedevice agent="fence_imm" ipaddr="10.51.188.178" login="USERID" name="N1PMCSAP02_FD" passwd="PASSW0RD"/>

</fencedevices>

</cluster>

This configuration file is kept identical on both hosts, N1PMCSAP01 and N1PMCSAP02.
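
One way to confirm that the two copies really match is to compare checksums. A minimal sketch with a hypothetical helper name; it compares any two local files, so the peer's copy is first fetched over the SSH trust set up earlier.

```shell
# Return 0 when both files have the same checksum and size.
# Hypothetical helper based on the POSIX cksum utility.
same_config() {
    [ "$(cksum < "$1")" = "$(cksum < "$2")" ]
}

# Usage on N1PMCSAP01 (not executed here; the /tmp path is illustrative):
#   ssh N1PMCSAP02 cat /etc/cluster/cluster.conf > /tmp/peer-cluster.conf
#   same_config /etc/cluster/cluster.conf /tmp/peer-cluster.conf && echo "configs match"
```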

Using the RHCS cluster: checking cluster status |

Use the clustat command to check the running status

[root@N1PMCSAP01 /]# clustat -l

Cluster Status for N1PMCSAP @ Fri May 26 14:13:25 2017

Member Status: Quorate

Member Name ID Status

------ ---- ---- ------

N1PMCSAP01-PRIV 1 Online, Local, rgmanager

N1PMCSAP02-PRIV 2 Online, rgmanager

Service Information

------- -----------

Service Name : service:APP

Current State : started (112)

Flags : none (0)

Owner : N1PMCSAP01-PRIV

Last Owner : N1PMCSAP01-PRIV

Last Transition : Fri May 26 13:55:45 2017

Current State shows the state of the service; started means it is running

Owner is the node that is currently running the service
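
For scripts or monitoring, the owner can be extracted from this output instead of read by eye. A sketch that assumes the `Owner : <node>` line format shown above; the function name is hypothetical, and the "Last Owner" line is deliberately not matched.

```shell
# Read `clustat -l` output on stdin and print the current owner of the service.
# Hypothetical helper; assumes lines of the form "Owner : <node name>".
service_owner() {
    # Split on the colon and its surrounding whitespace, then match the field "Owner" exactly.
    awk -F'[ \t]*:[ \t]+' '$1 ~ /^[ \t]*Owner$/ { print $2 }'
}

# Usage on a cluster node (not executed here):
#   clustat -l | service_owner
```

If several services are defined, the helper prints one owner per service in the order clustat lists them.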

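Manual cluster switchover |

[root@N1PMCSAP01 /]# clusvcadm -r APP -m N1PMCSAP02-PRIV

# Manually relocate service group APP from node 1 to node 2 (-r takes the service group name, -m the target cluster member as listed by clustat)

[root@N1PMCSAP01 /]# clusvcadm -d APP

# Manually stop (disable) the APP service

[root@N1PMCSAP01 /]# clusvcadm -e APP

# Manually enable the APP service

[root@N1PMCSAP01 /]# clusvcadm -M APP -m N1PMCSAP02-PRIV

# Migrate APP to N1PMCSAP02; note that -M is defined for virtual machine services, so for an ordinary service such as APP use -r instead

Automatic cluster failover |

When server hardware fails and the peer can no longer be reached over the heartbeat network, the RHCS cluster automatically reboots (fences) the failed server and moves its resources to the healthy server. After the hardware has been repaired, the administrator can choose whether to move the service back.

Note:

Do not unplug the heartbeat network of both servers at the same time; doing so causes split-brain.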
